P3502

Evaluation Domains: An Overview

Evaluation has always been a part of Extension programming. However, many people view it as a necessary evil of the reporting process rather than as an opportunity to identify, document, and celebrate accomplishments. As competition for funding for nonformal education programs increases, demonstrating Extension’s programmatic successes is essential to maintaining funding and remaining relevant to citizens. Although decisions about which programs and organizations to fund are often based on demonstrated outcomes and impacts, evaluation must begin before implementation and continue during it to give a program the best chance of demonstrating outcomes after it has ended.

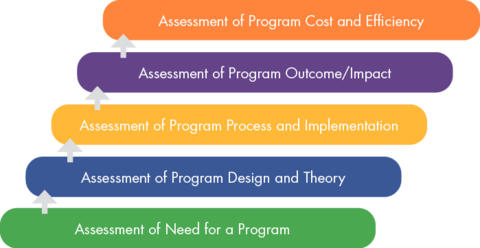

Many terms are used to refer to different evaluation-related activities. You may have heard terms such as needs assessment, theory of change, logic model, formative evaluation, summative evaluation, impact evaluation, and cost-benefit analysis. These and many other evaluation terms fall into five domains of evaluation as described by Rossi, Lipsey, and Henry (2019). The domains identify the types of evaluation that can be conducted.

Five Domains of Evaluation

The domains form a hierarchy: starting from the bottom, each domain builds on the one before it. Ideally, you would not design and implement a program unless a need for it had been identified. Likewise, you cannot assess program outcomes without first implementing a program, and it would be inappropriate to conduct an efficiency assessment before establishing that a program achieved its desired outcomes.

Domain 1: Assessment of Need for a Program

The first evaluation domain is needs assessment. What is a need? A need is defined as the gap between “what is” and “what should be” (Witkin & Altschuld, 1995). We can draw on several sources of information to determine needs. These include conversations with members of the potential target audience or with knowledgeable informants, as well as reviews of existing data and statistics.

For example, many low-income families struggle with debt, which can create a great deal of stress and other problems.

A needs assessment, typically conducted before a program is implemented, helps us determine whether a program is needed to address this issue.

The overarching evaluation question in this domain asks:

Is there a need for this type of program in this context?

Domain 2: Assessment of Program Design and Theory

The second evaluation domain is theory assessment. A theory of change identifies components needed to accomplish a program’s goal (Center for Theory of Change, 2013), while a logic model presents those components in a visual format to show the relationships between activities and outcomes (Taylor-Powell & Henert, 2008). We use theory and research to determine what content should be delivered and the most effective ways to deliver it. Before implementing a program, we need to make sure the conceptualization and design of the program reflect valid assumptions about the problem and a feasible approach to resolving it.

For example, in reviewing theory and research, we determine that one promising approach to helping families with debt is providing education and hands-on practice related to developing a budget.

Therefore, we develop a curriculum for an educational program that includes both content information and hands-on budgeting exercises.

The overarching evaluation question in this domain asks:

Is the program conceptualized in a way that it should work?

Domain 3: Assessment of Program Process and Implementation

The third evaluation domain is implementation assessment, or process evaluation. This domain focuses on the program’s operation and delivery, or its process, to determine whether a program was delivered in the way it was intended.

For example, we could assess how well the hands-on budgeting activities matched the program’s objectives, whether we reached the desired target audience, and whether the activities were delivered as described in the curriculum.

As this example shows, process evaluation is useful for refining or improving a program; for this reason, it is also called formative evaluation.

The overarching evaluation question in this domain asks:

Was this program implemented properly and according to the plan?

Domain 4: Assessment of Program Outcome and/or Impact

The fourth evaluation domain is outcome and impact assessment. An outcome refers to a change in knowledge, attitudes, skills, behaviors, practices, or conditions (Taylor-Powell & Henert, 2008). Impact refers to whether the program being evaluated actually caused the documented outcomes. Outcome and impact assessments require clearly defined program objectives that identify how much change is expected, in what area, by when, and for whom.

For example, outcome assessment helps us determine whether we reached our goal of having 80 percent of program participants increase their knowledge and skills related to developing a budget.
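Checking an objective like this comes down to simple arithmetic. The Python sketch below is a minimal illustration, not a prescribed method. It makes several assumptions, all hypothetical: each participant took the same budgeting knowledge quiz before and after the program, "increased knowledge" means a higher post-test score than pre-test score, and the scores themselves are invented for the example. The function names `share_improved` and `objective_met` are also illustrative.

```python
# Minimal sketch: checking a Domain 4 outcome objective.
# Assumptions (hypothetical): participants took the same budgeting quiz
# before and after the program, and "increased knowledge" means a
# post-test score strictly higher than the pre-test score.
from typing import Sequence

TARGET_SHARE = 0.80  # objective from the example: 80 percent of participants


def share_improved(pre: Sequence[float], post: Sequence[float]) -> float:
    """Return the fraction of participants whose post score exceeds their pre score."""
    if len(pre) != len(post) or not pre:
        raise ValueError("pre and post must be non-empty and the same length")
    improved = sum(1 for before, after in zip(pre, post) if after > before)
    return improved / len(pre)


def objective_met(pre: Sequence[float], post: Sequence[float],
                  target: float = TARGET_SHARE) -> bool:
    """True if at least the target share of participants improved."""
    return share_improved(pre, post) >= target


# Hypothetical quiz scores (out of 10) for 10 participants.
pre_scores = [4, 5, 6, 3, 7, 5, 4, 6, 5, 8]
post_scores = [7, 6, 8, 5, 7, 8, 6, 9, 4, 9]

print(f"Share improved: {share_improved(pre_scores, post_scores):.0%}")  # Share improved: 80%
print("Objective met" if objective_met(pre_scores, post_scores) else "Objective not met")
```

In this invented data, 8 of 10 participants improved, so the 80 percent objective is just met. A result like this documents an outcome. Showing impact, meaning that the program caused the change, would require a stronger design, such as comparison with a similar group that did not take part.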

These assessments, also called summative evaluation, are typically conducted after the program is completed, often for the benefit of an external audience or decision-makers.

The overarching evaluation question in this domain asks:

Did this program achieve its desired outcomes and have an impact on its intended targets?

Domain 5: Assessment of Program Cost and Efficiency

The final evaluation domain is efficiency assessment. This domain focuses on whether the benefits of the program justify the costs.

For example, did the outcomes that resulted from our budgeting program outweigh the costs of providing it?

Efficiency assessment can take various forms; two common analyses are cost-benefit analysis and cost-effectiveness analysis. Although a form of efficiency assessment can be done before a program is implemented to determine its economic feasibility, full-scale efficiency assessment is typically conducted after you have evidence of program outcomes. Conducting an efficiency assessment typically requires the involvement of an economist with expertise in these techniques.
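The main difference between the two analyses is how outcomes are expressed. Cost-benefit analysis converts outcomes into dollars and compares them with costs. Cost-effectiveness analysis leaves outcomes in their natural units and reports the cost per unit of outcome. The Python sketch below illustrates this under stated assumptions. All dollar amounts and outcome counts are hypothetical, and the names `ProgramResults`, `net_benefit`, `benefit_cost_ratio`, and `cost_per_outcome` are illustrative. A real analysis would also address issues the sketch ignores, such as indirect costs and discounting, which is one reason an economist's involvement is recommended.

```python
# Minimal sketch contrasting two efficiency measures.
# All figures are hypothetical; real analyses also handle indirect costs,
# discounting of future benefits, and uncertainty, which are omitted here.
from dataclasses import dataclass


@dataclass
class ProgramResults:
    total_cost: float          # dollars spent delivering the program
    monetized_benefits: float  # dollar value assigned to outcomes (cost-benefit only)
    outcome_units: int         # e.g., participants who developed a working budget


def net_benefit(r: ProgramResults) -> float:
    """Cost-benefit analysis: benefits minus costs, both in dollars."""
    return r.monetized_benefits - r.total_cost


def benefit_cost_ratio(r: ProgramResults) -> float:
    """Cost-benefit analysis: dollars of benefit per dollar of cost."""
    return r.monetized_benefits / r.total_cost


def cost_per_outcome(r: ProgramResults) -> float:
    """Cost-effectiveness analysis: dollars spent per unit of (non-monetized) outcome."""
    return r.total_cost / r.outcome_units


results = ProgramResults(total_cost=20_000.0, monetized_benefits=50_000.0, outcome_units=80)
print(net_benefit(results))         # 30000.0
print(benefit_cost_ratio(results))  # 2.5
print(cost_per_outcome(results))    # 250.0
```

In this hypothetical case, the cost-benefit view reports $2.50 in benefits for every $1 spent. The cost-effectiveness view reports $250 per participant who developed a working budget, and it never requires putting a dollar value on that outcome.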

The overarching evaluation question in this domain asks:

Is the program cost-effective?

Conclusion

The evaluation domain hierarchy shows that program development and evaluation go hand in hand. Evaluation findings in one domain can inform programmatic and evaluation decisions in subsequent domains. Knowing the types of evaluation that can be conducted and the overarching question answered in each domain can help Extension professionals identify appropriate evaluation techniques and tools. Evaluation occurs throughout every phase of a program: before, during, and after implementation.

References

Center for Theory of Change. (2013). What is theory of change?

Rossi, P. H., Lipsey, M. W., & Henry, G. T. (2019). Evaluation: A systematic approach (8th ed.). Sage Publications.

Taylor-Powell, E., & Henert, E. (2008). Developing a logic model: Teaching and training guide. University of Wisconsin Extension, Program Development and Evaluation.

Witkin, B. R., & Altschuld, J. W. (1995). Planning and conducting needs assessments: A practical guide. Sage Publications.

Publication 3502 (POD-01-24)

By Donna J. Peterson, PhD, Associate Extension Professor; Laura H. Downey, DrPH, MCHES, Associate Extension Professor; and Alisha M. Hardman, PhD, CFLE, Assistant Professor, Human Sciences.

The Mississippi State University Extension Service is working to ensure all web content is accessible to all users. If you need assistance accessing any of our content, please email the webteam or call 662-325-2262.
